If a particular GPU is consuming a significant amount of power, you may need to optimize its AI workloads. Then you can correlate this data with any other processes running during that time period for better troubleshooting. You can use the integration dashboard to visualize how much power a GPU is consuming over time and quickly identify times when it’s using consistently higher wattage.

This not only affects GPU health but can also increase the overall costs of running your AI workloads. A consistently higher-than-normal wattage could indicate that an AI workload is processing more data than the GPU can handle in the long term. For example, a GPU’s power usage measures the number of watts it is consuming to process information. Since AI workloads require extensive GPU processing power, monitoring their usage can help you make sure your hardware remains performant and cost effective. A gradual increase in both temperature and memory utilization, on the other hand, could be the result of an exceedingly demanding workload that the GPU is struggling to keep up with. For example, a sudden spike in GPU temperature could indicate a hardware malfunction, such as a broken fan.

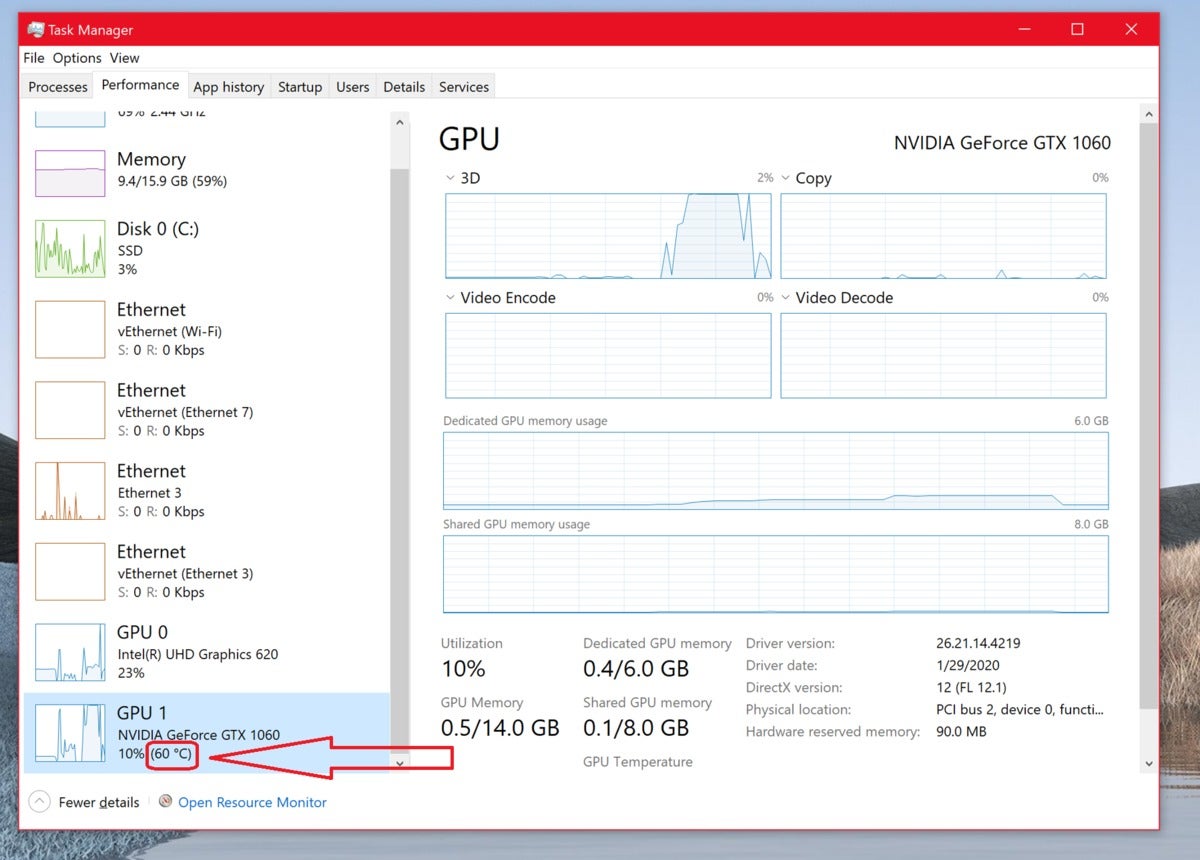

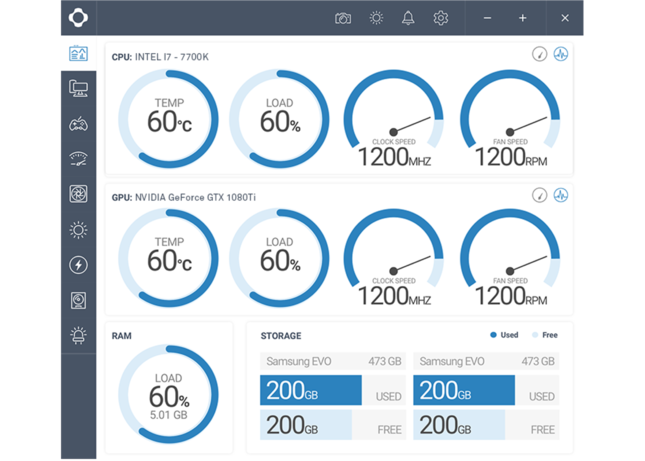

You can then use the dashboard’s GPU Temperature Overview section to determine if the issue is due to an isolated spike or a gradual increase in hardware temperature over time.Ĭomparing this data with other key performance metrics like memory utilization can help you pinpoint the exact cause of the issue. Monitoring GPU temperature can help you ensure that your workloads are not overloading your hardware during these types of high-compute tasks, which can lead to performance throttle and hardware burnout.įor example, one of our integration’s customizable monitors will automatically notify you when a GPU’s temperature exceeds the safety threshold of 85 degrees Celsius. Training AI models requires substantial computing power from GPUs, and it can quickly increase hardware temperatures-a crucial indicator of GPU health and performance. Identify the source of bottlenecks in GPU resources This visibility enables you to quickly determine how to best optimize inefficient AI workloads. You can also track the status of our integration’s out-of-the-box recommended monitors, which will automatically notify you of critical performance issues like increased memory utilization or a high number of XID errors. With the dashboard, you can review key GPU metrics like temperature, power consumption, and framebuffer usage to better understand the state of your AI stack. We also provide an out-of-the box dashboard and multiple monitors to help you track these metrics alongside trends in overall performance. Our integration offers an extensive collection of GPU utilization, performance, and process-specific metrics that you can easily customize based on your specific telemetry needs. NVIDIA GPUs power a wide variety of resource-intensive applications, so it’s important to have comprehensive visibility into each GPU instance to ensure that it is supporting workloads efficiently. 
Identify the source of bottlenecks in GPU resources.In this post, we’ll show you how you can use our integration to: And because collected telemetry is deeply integrated with the rest of the Datadog platform, organizations can correlate GPU performance and usage with other critical parts of their AI stack. This capability enables you to monitor the performance of all your GPU workloads in a single platform, regardless of whether they are containerized, hosted locally, or deployed in the cloud. Now, organizations can use Datadog to seamlessly collect metrics exposed by the DCGM Exporter from widely used GPU architectures, such as NVIDIA’s Tesla, A100, and Kepler series. With the rapidly growing popularity of AI-based applications, and NVIDIA’s role in supporting them at scale, an increasing number of organizations need to efficiently monitor NVIDIA’s GPU performance alongside the rest of their AI stack.Īs part of our ongoing commitment to providing our customers with increased visibility into the layers of their AI stack, we’re excited to announce our integration with NVIDIA Data Center GPU Manager (DCGM) Exporter, a suite of diagnostic and management tools for monitoring GPUs in high-performance environments. In these environments, GPUs are required because of their ability to handle parallel computing, which CPUs alone cannot do effectively. Due to their high-performance capabilities, NVIDIA’s discrete graphics processing units (GPUs) now account for approximately 80 percent of the market share for production-level AI, gaming, graphics rendering, and other complex data processing tasks. NVIDIA is well known for its computing advancements across a broad range of industries and has become the clear leader in the artificial intelligence (AI) space.
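The checks this post describes (alerting at 85 degrees Celsius, telling an isolated temperature spike from a gradual rise, and spotting consistently high wattage) can be sketched in a few lines of Python. This is only an illustrative sketch: the function names, the 10-degree jump used to call something a spike, and the 20 percent margin over a baseline wattage are assumptions, not part of the integration, and the samples are plain lists rather than metrics pulled from Datadog or the DCGM Exporter.

```python
# Hypothetical helpers; none of these names come from Datadog or DCGM.
TEMP_THRESHOLD_C = 85.0  # safety threshold mentioned in the post


def classify_temperature(samples, threshold=TEMP_THRESHOLD_C, spike_delta=10.0):
    """Label a time-ordered list of GPU temperatures (degrees Celsius).

    Returns "ok" if nothing exceeds the threshold. Otherwise the first
    breach is compared with the sample just before it: a jump of at least
    `spike_delta` (an assumed cutoff) is an "isolated_spike", a smaller
    step is a "gradual_increase". With no earlier sample the answer is
    "unknown".
    """
    breaches = [i for i, temp in enumerate(samples) if temp > threshold]
    if not breaches:
        return "ok"
    first = breaches[0]
    if first == 0:
        return "unknown"
    jump = samples[first] - samples[first - 1]
    return "isolated_spike" if jump >= spike_delta else "gradual_increase"


def has_sustained_high_power(watts, baseline_w, ratio=1.2, min_run=3):
    """Return True if at least `min_run` consecutive samples exceed
    `baseline_w * ratio`. Both the ratio and the run length are assumed
    values chosen for illustration."""
    limit = baseline_w * ratio
    run = 0
    for sample in watts:
        run = run + 1 if sample > limit else 0
        if run >= min_run:
            return True
    return False


if __name__ == "__main__":
    print(classify_temperature([60, 61, 62, 90, 63]))
    print(classify_temperature([70, 75, 80, 84, 86]))
    print(has_sustained_high_power([200, 250, 260, 255, 210], baseline_w=200))
```

A real setup would tune these cutoffs per GPU model and compare the result with memory utilization, as the post suggests, before deciding whether the cause is hardware or workload.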
